Most sales teams believe lead scoring solves lead quality.

It doesn’t.

In many cases, lead scoring fails before the first sales call even happens. Not because the lead is bad, but because the score can’t be trusted.

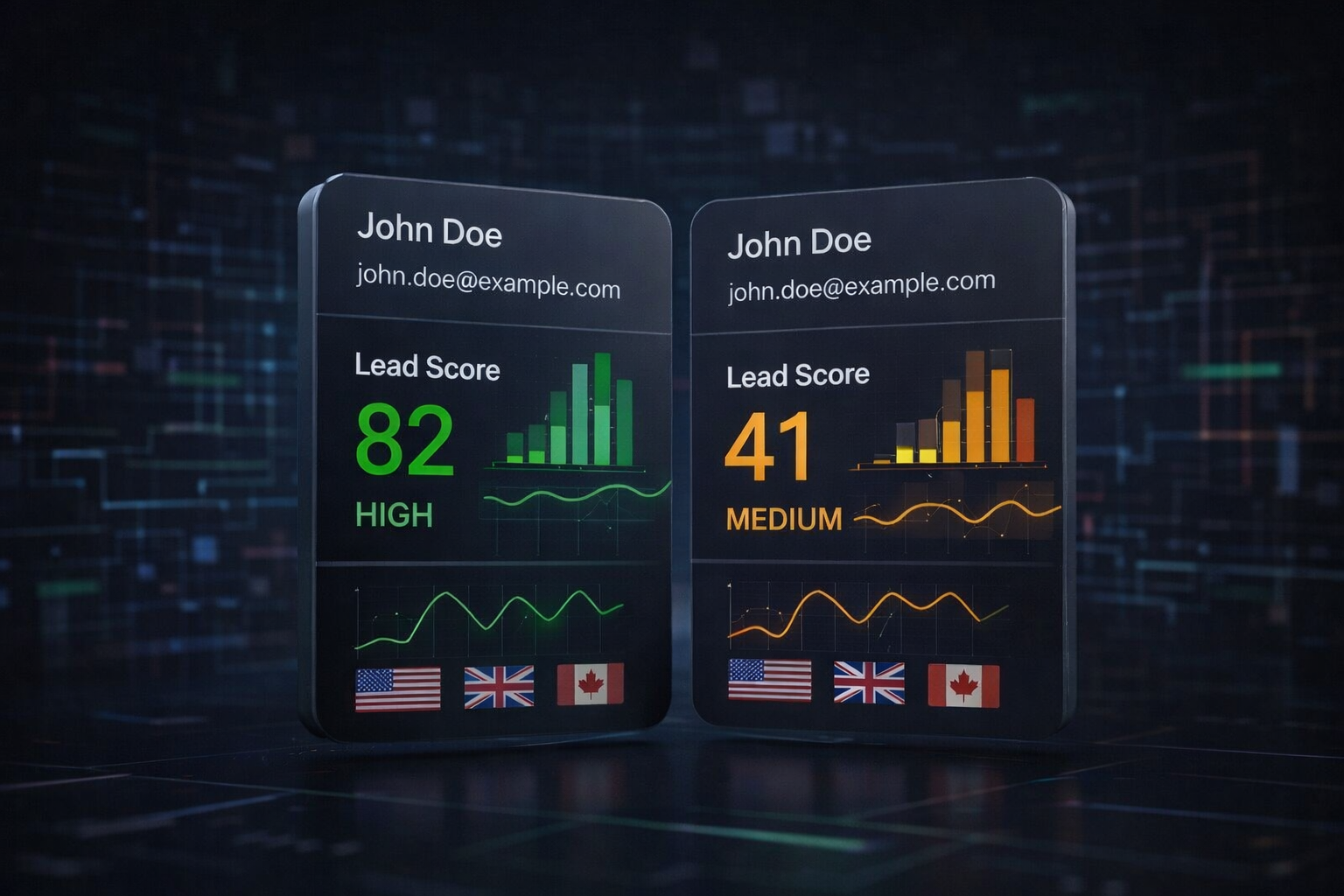

The same lead, different score

This is more common than most teams admit:

- The same lead enters two different CRMs

- Or the same CRM, but a different pipeline

- Or the same setup, but one month later

And suddenly:

- The score changes

- The priority changes

- The decision changes

Nothing about the lead changed.

Only the system did.

When the same lead gets different scores depending on where or when it’s evaluated, every decision built on that score becomes unreliable.

This isn’t a spam problem

It’s tempting to blame:

- Fake emails

- Low-intent users

- Bad traffic sources

But that’s not the real issue.

Most inbound leads sit in a gray area:

They look valid, behave normally, and pass basic filters — yet they consistently waste sales time.

The problem isn’t detection.

It’s instability.

Scoring drift is the silent killer

Lead scoring systems tend to drift over time:

- Rules are tweaked

- Thresholds are adjusted

- New signals are added

- Old ones lose weight

Each change may seem reasonable on its own.

But collectively, they create a system where:

- Yesterday’s “high-quality lead” is today’s “medium priority”

- Historical comparisons stop making sense

- Sales loses confidence in the score

At that point, teams stop trusting the number — and start relying on gut feeling again.
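To make the drift concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the signal names, the weights, the two rule sets, and the threshold are invented for illustration and are not taken from any real CRM. It scores one unchanged lead under an earlier and a later version of the rules.

```python
# Hypothetical rule sets: signal names and weights are illustrative only.
RULES_JANUARY = {
    "company_email": 30,
    "visited_pricing": 40,
    "team_size_over_50": 20,
}

# Six months of "reasonable" tweaks: two weights lowered, one new signal added.
RULES_JUNE = {
    "company_email": 20,
    "visited_pricing": 25,
    "team_size_over_50": 20,
    "opened_newsletter": 15,
}

HIGH_PRIORITY_THRESHOLD = 70  # assumed cutoff for "high" priority


def score(lead, rules):
    """Sum the weights of every signal that is true for this lead."""
    return sum(weight for signal, weight in rules.items() if lead.get(signal))


def priority(points, threshold=HIGH_PRIORITY_THRESHOLD):
    """Map a numeric score to a priority label."""
    return "high" if points >= threshold else "medium"


# The lead itself never changes.
lead = {"company_email": True, "visited_pricing": True, "team_size_over_50": False}

january = score(lead, RULES_JANUARY)
june = score(lead, RULES_JUNE)

print(january, priority(january))  # 70 high
print(june, priority(june))        # 45 medium
```

No single edit to the rules looks dramatic, yet the same lead moves from "high" to "medium" without any change in its own data.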

When scores can’t be trusted, teams pay the price

Unstable lead scoring doesn’t just affect dashboards.

It affects people.

- Sales reps call leads they shouldn’t

- Good leads get buried

- Marketing and sales argue over “lead quality”

- Operations lose predictability

The score exists, but no one fully believes it.

And a score that isn’t trusted is worse than no score at all: it still drives routing and reports, while the real decisions are made on gut feeling.

Lead scoring should be a contract, not a guess

At its core, lead scoring should behave like a contract:

- Same input → same result

- Today and six months from now

- Regardless of CRM, pipeline, or internal changes

It should be:

- Predictable

- Stable

- Auditable

Without that stability, every downstream decision is built on sand.
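One way to picture that contract in code, as a minimal sketch rather than a description of any particular CRM or of how LeadFlags works: pin the rules to an explicit version, make scoring a pure function of the lead and that version, and return an audit record alongside the number. The names `RuleSet`, `score_lead`, and `fingerprint`, as well as the signals and weights, are assumptions made up for this example.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleSet:
    """An immutable, versioned set of scoring rules (hypothetical structure)."""
    version: str
    weights: tuple  # pairs of (signal_name, weight)


# Illustrative rule set; signals and weights are invented for this example.
RULESET_V1 = RuleSet(
    version="v1",
    weights=(("company_email", 30), ("visited_pricing", 40)),
)


def fingerprint(lead: dict) -> str:
    """Hash a canonical JSON form of the lead so key order does not matter."""
    canonical = json.dumps(lead, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def score_lead(lead: dict, ruleset: RuleSet) -> dict:
    """Pure function: no clock, no randomness, no hidden state.

    The result depends only on the lead and the pinned rule set.
    """
    points = sum(weight for signal, weight in ruleset.weights if lead.get(signal))
    return {
        "score": points,
        "ruleset_version": ruleset.version,      # which rules produced the score
        "input_fingerprint": fingerprint(lead),  # which input was scored
    }


# Same lead, different key order, as it might arrive from two pipelines.
lead_a = {"company_email": True, "visited_pricing": True}
lead_b = {"visited_pricing": True, "company_email": True}

record_a = score_lead(lead_a, RULESET_V1)
record_b = score_lead(lead_b, RULESET_V1)
print(record_a["score"], record_a == record_b)  # 70 True
```

Because each record carries the rule version and an input fingerprint, a score that differs from an earlier one can be traced either to a changed lead or to a changed rule set, which is what makes the number auditable. Changing the rules then means publishing a new version rather than silently editing the old one.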

Before the first call, one question matters

Before adding more rules, tools, or automation, it’s worth asking:

Can you trust your lead score before the first sales call?

That question alone explains why so many teams struggle with lead quality — even when the leads themselves look fine.

We’re exploring this problem in depth at LeadFlags.